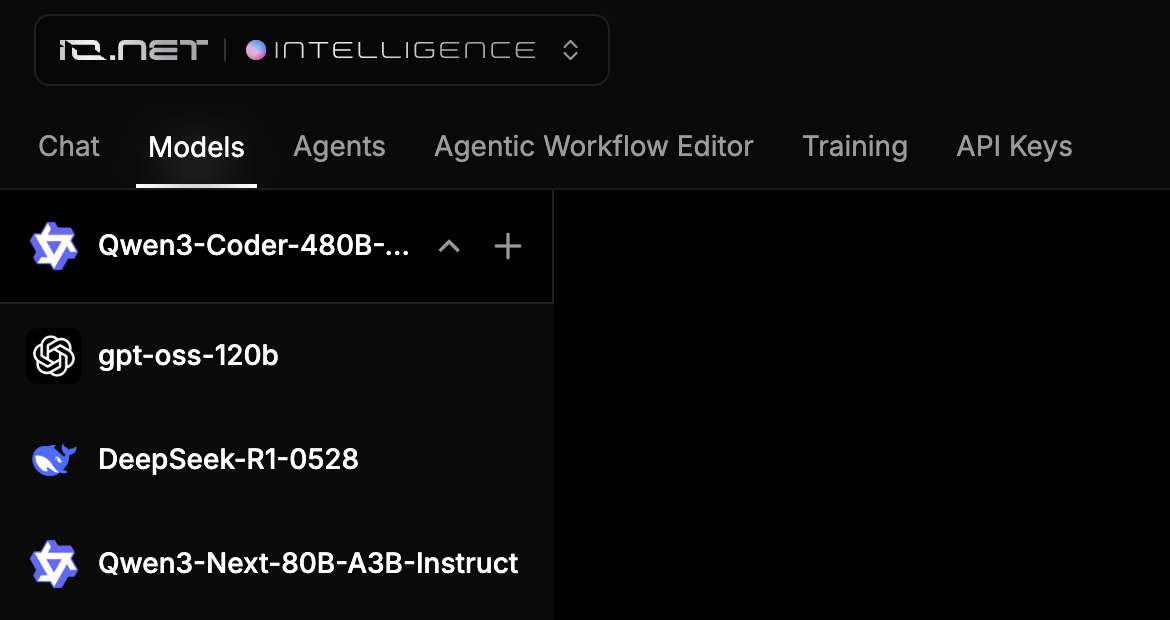

To get started, navigate to the Models tab.

What you can do on the Models Dashboard:

- Browse the list of models with their context lengths and prices.

Popular AI Models

| Model Name | Developer | Description |
|---|---|---|
| deepseek-ai/DeepSeek-R1-0528 | DeepSeek | Enhanced model with improved reasoning, inference, and algorithmic post-training optimizations; designed for high-accuracy tasks. |
| meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8 | Meta AI | Multimodal instruction-tuned model leveraging a mixture-of-experts (MoE) architecture for top-tier performance in both text and image understanding. |
| gpt-oss-120b | OpenAI | Open-weight 117B-parameter mixture-of-experts model supporting 128k context and advanced reasoning via chain-of-thought; optimized for real-world tool use, coding, and efficient local or cloud deployment. |
| Intel/Qwen3-Coder-480B-A35B-Instruct-int4-mixed-ar | Qwen | High-capacity, instruction-tuned code generation model optimized with INT4 mixed precision for fast inference, designed for complex programming tasks on Intel hardware. |
| Qwen3-Next-80B-A3B-Instruct | Qwen | High-capacity model optimized for instruction following and knowledge-intensive tasks. |
| gpt-oss-20b | OpenAI | Open-weight GPT-style model suitable for text generation and general-purpose tasks. |
| Qwen3-235B-A22B-Thinking-2507 | Qwen | Powerful 235B-parameter language model optimized for deep reasoning, planning, and complex multi-step tasks. |
| Mistral-Nemo-Instruct-2407 | Mistral AI | Instruction-tuned model focusing on efficient reasoning and NLP tasks. |
| meta-llama/Llama-3.3-70B-Instruct | Meta AI | Large-scale transformer model fine-tuned for instruction following, aligning responses with human preferences. |
| mistralai/Mistral-Large-Instruct-2411 | Mistral AI | Large instruction-tuned model offering strong general-purpose reasoning, summarization, and assistant-style responses. |
| Qwen/Qwen2.5-VL-32B-Instruct | Qwen | Powerful vision-language model trained to follow multimodal instructions, suitable for image understanding, captioning, and reasoning. |
| meta-llama/Llama-3.2-90B-Vision-Instruct | Meta AI | Vision-language model with instruction tuning, capable of image analysis, visual Q&A, and multimodal dialogue generation. |
| BAAI/bge-multilingual-gemma2 | BAAI | Multilingual embedding model optimized for semantic search and retrieval tasks across diverse languages. |
| zai-org/GLM-4.6 | Z.AI | Advanced large language model that expands context capacity to 200K tokens and significantly enhances coding, reasoning, and agentic capabilities. It excels in real-world coding tools, delivering more natural, human-aligned outputs. |
| zai-org/GLM-4.7 | Z.AI | Advanced large language model that retains a 200K-token context window and elevates coding, reasoning, and agentic capabilities with enhanced multi-step execution and consistency. It introduces thinking modes such as Preserved Thinking and Turn-level Thinking. |
| moonshotai/Kimi-K2-Instruct-0905 | Moonshot AI | A state-of-the-art mixture-of-experts (MoE) language model with 32 billion activated parameters and 1 trillion total parameters. It delivers exceptional reasoning, coding, and content-generation performance. |
| moonshotai/Kimi-K2-Thinking | Moonshot AI | A high-performance open-source thinking model built for step-by-step reasoning and dynamic tool use. It achieves state-of-the-art results on benchmarks such as Humanity’s Last Exam (HLE) and BrowseComp by dramatically scaling multi-step reasoning depth while maintaining stable tool use across 200–300 sequential calls. |
| deepseek-ai/DeepSeek-V3.2 | DeepSeek | An LLM that combines breakthrough efficiency with exceptional reasoning and tool-use performance. Powered by Sparse Attention and scalable RL post-training, it delivers premium long-context quality at reduced cost. Reports place it in the GPT-5 class with gold-medal wins in the 2025 IMO and IOI. |

Testing an AI Model

Before using any of our AI models in your project, you can perform real-time testing directly from the dashboard. This allows you to evaluate the model’s performance and ensure it meets your requirements.

How to Test an AI Model

- Select a Model:
  - Navigate to the Models tab.
  - Select the model you want to test by clicking on it.
- Start Testing:
  - On the AI model chat page, type your question or input into the centered text field.
  - Press Enter or click the arrow icon to submit your query.

  Your Daily Credits usage is shown above the request field. To view detailed model-specific usage information, visit the IO Intelligence Payments page.
- Interact with the Model:
  - The AI model will respond, starting a conversation. You can continue testing by asking additional questions or providing more input.
  - Compare different models to find the one that best suits your needs.
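If you later want to repeat this kind of side-by-side comparison from a script rather than the dashboard, the sketch below shows one way to prepare the same prompt for several models. It is only an illustration under assumptions this page does not confirm: that the models are reachable through an OpenAI-style chat completions endpoint, and that the model names from the table above are valid identifiers there. `API_URL`, `build_payload`, `build_comparison`, and `send` are hypothetical names, and the URL is a placeholder.

```python
import json
import urllib.request

# Placeholder URL (assumption): replace with the endpoint from your own API settings.
API_URL = "https://example.invalid/v1/chat/completions"


def build_payload(model: str, prompt: str) -> dict:
    """Build an OpenAI-style chat request body for a single model."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


def build_comparison(models: list[str], prompt: str) -> list[dict]:
    """Return one payload per model, all sharing the same prompt."""
    return [build_payload(model, prompt) for model in models]


def send(payload: dict, api_key: str) -> dict:
    """POST one payload. Not called here: it needs a real endpoint and API key."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Same question for two models from the table, mirroring a manual comparison.
payloads = build_comparison(
    ["meta-llama/Llama-3.3-70B-Instruct", "deepseek-ai/DeepSeek-R1-0528"],
    "Summarize the trade-offs of mixture-of-experts models in two sentences.",
)
```

Keeping the prompt identical across payloads is the point of the exercise: any difference in the answers then comes from the model, not from the input.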

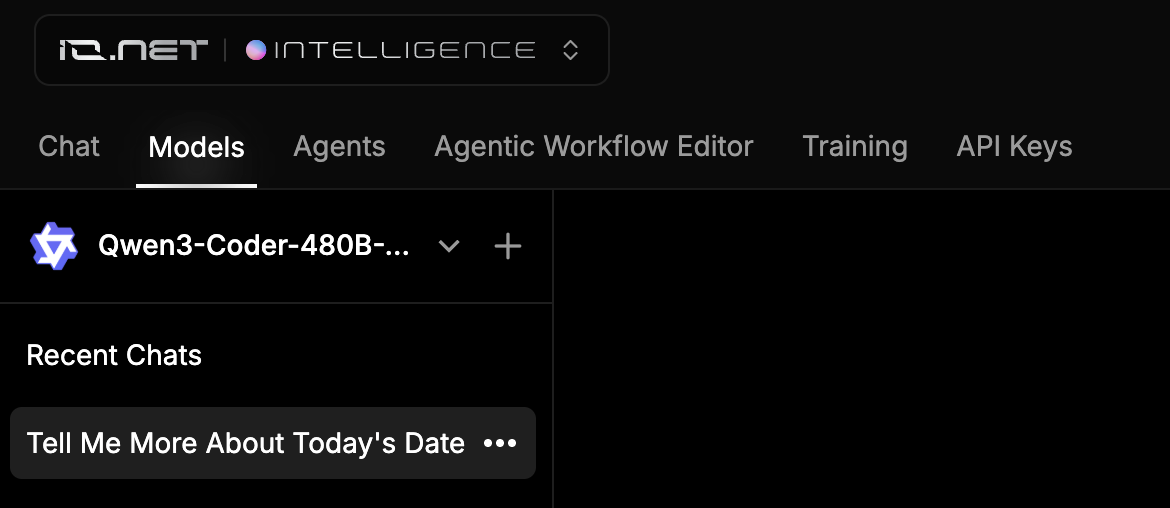

Managing Chats

- View Previous Chats:
  - On the left, you can manage your previously created chats with different AI models.
  - Click any chat to reopen the conversation.
- Create or Remove Chats:
  - Create a new chat by clicking the + button next to the model name.
  - Remove a chat by clicking the three-dot menu and selecting Delete. Removing chats you no longer need keeps your workspace organized.

Switching Between Models

- Next to the + button is a dropdown menu showing the currently selected model.
- Click the dropdown menu to select a different AI model and begin a new conversation with it.