Explore our Blog for

Latest News & Insights

Stay up to date with the latest news and new products. Discover what's happening around io.net.

io.net blog

AI is running in the dark. It's time to turn on the lights. Let’s say you have a truly innovative idea and the team to launch the next great AI project. But when you sit down to get started, you immediately hit a wall. The compute you need is controlled by a handful of hyperscalers. They limit access, set prices that are opaque and unaffordable, and force you into enterprise contracts designed for companies ten times your size. The decisions that are affecting the infra you need to succeed are

AI infrastructure was built for humans. And it comes with human barriers and limitations. Enterprise logins, KYC verification, approval workflows, and admin portals all require a person at the keyboard. Someone needs to sign up, authenticate, pay, and manage all of these services. And that someone has always been a human, until now. io.net is changing this. Agent Cloud uses an MCP library to remove the need for a human in the equation, marking a genuine turning point in how autonomous AI system

Latest

Render Network has built a compelling reputation as the GPU solution for "creative-first" and "research-first" developers. With a decentralized marketplace for GPU compute, native support for Blender and Cinema 4D, and an expanding AI inference layer through its Dispersed subnet, Render Network is a strong fit for 3D artists, VFX studios, and AI/ML teams looking for cost-effective alternatives to centralized cloud providers. Render Network does this all without managing any raw compute infrastru

Over recent months, we have seen both AI providers and hyperscalers go offline for several hours. Production workflows stalled almost immediately. Customer service bots went dark, code pipelines froze, and engineering teams struggled to come up with emergency plans most of them hadn’t prepared for. Every time there is an outage at a compute provider or major AI company, an important question remains unanswered when the service comes back online: if these providers can't guara

Most developers don't fail at distributed GPU training because they select the wrong model architecture. They fail because they provision the wrong cluster and GPU mix, the wrong interconnect topology, and the wrong scaling strategy. To add insult to injury, they’ll burn $4,000 in three hours trying to figure out what the heck went wrong. This quick guide exists so you can avoid this mess. When we published a GPU cluster quick-reference card on X earlier this quarter, it became one of our

18 production-ready AI agents for NLP, market data, & automation on io.intelligence. Consolidate your AI stack with one API.

Together AI has built a reputation as the GPU solution for "research-first" developers. Featuring polished, serverless inference APIs and managed fine-tuning pipelines, Together AI is a good fit for AI/ML teams transitioning from open-source models to production endpoints, all without managing raw infrastructure. Whereas legacy hyperscalers focus on general-purpose compute and boutique clouds serve academics with SSH-and-go simplicity, Together AI is aimed at the technical mid-market. It h

Does this sound familiar? A new Web3 network launches. It issues tokens to attract early contributors. People pile in. The token price climbs. The project looks healthy. Then the market turns. Token price drops. Contributors turn away. And the network shrinks. Fewer contributors also means less utility, which means less demand, which means the price drops more. And this same pattern continues until there's not much left besides a whitepaper and some ghost validators. io.net’s new tokenomics i

Your 2026 guide to building a purpose-built GPU cluster for AI. Includes TCO, vendor-agnostic benchmarks, hardware selection (H100/MI300X), and rollout plan.

Z.ai's GLM-4.7-Flash (30B MoE) is live on io.intelligence. Get the strongest 30B model for coding & reasoning with best-in-class performance-per-dollar.

Complete technical guide to decentralized compute: benchmarks, cost calculator, compliance checklist, and step-by-step migration from AWS/GCP.

Learn what a GPU cluster is, how it differs from multi-GPU servers, and use our cost calculator to decide if you should build or rent one.

Discover io.net's Incentive Dynamic Engine (IDE): an adaptive tokenomics model bringing sustainable economics and predictable stability to decentralized GPU compute.

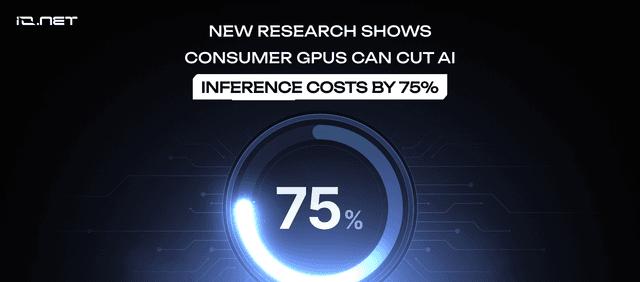

New io.net study shows consumer GPUs (RTX 4090) can cut AI inference costs by up to 75% for LLMs, enabling a sustainable, heterogeneous compute infrastructure.

Blockchain promised to solve centralization, but focused on the wrong problems. DePIN networks like io.net finally deliver real value through affordable GPU access.

Latest By Topic (99)

See how Leonardo.Ai scaled from 14K to 19M users and cut GPU costs by over 50% using io.net's high-performance, affordable compute solution for generative AI.

KayOS, an AI startup, achieved 5x developer power with io.net. Learn how their 2-person team cut compute costs by 60% ($2.5k to $1k/month) using io.intelligence.

![AI Training vs Inference: Key Differences, Costs & Use Cases [2025]](/_next/image?url=https%3A%2F%2Fstorage.ghost.io%2Fc%2F33%2F2c%2F332c3e6c-8dbe-4aa1-87fd-98e6cf4fe33a%2Fcontent%2Fimages%2F2025%2F11%2Fio-Blog-AI-Inference-vs-Training.png&w=640&q=75)

AI training teaches models to recognize patterns. AI inference applies those models to make predictions. Learn the differences, costs, and optimization strategies in io.net’s complete guide.

Complete comparison of GPU vs CPU for AI: deep learning performance, hardware cost, TCO, and ideal use cases. Choose the right processor for your training and inference workloads.

Wondera cut AI training costs 75% and scaled to 200,000 users in 4 months using io.net's decentralized GPU infrastructure, launching 3 months ahead of schedule.

Unified Chat is the single, intelligent AI workspace that unifies every model and tool. Auto-routes for optimal quality and cost. End fragmentation.

Vistara Labs used io.net to scale its Zaara AI platform, building 5,600 apps in two months while cutting compute costs by 3x and achieving zero infrastructure failures.

Complete financial framework for GPU infrastructure decisions. Cost modeling, ROI analysis & budget optimization for AI companies.

io.net surpasses $20M in verifiable on-chain revenue, proving decentralized GPU infrastructure can compete with AWS and GCP on cost, performance, and real-world adoption.

Model deployment connects trained ML models to users, yet most deployments stall due to cloud costs and vendor lock-in. Decentralized platforms cut costs 90%.